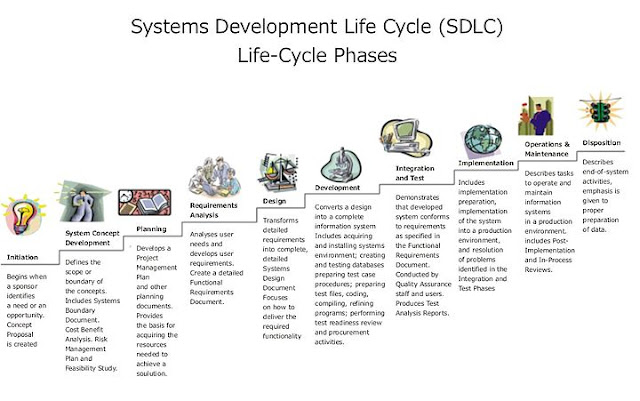

The Systems Development Life Cycle (SDLC) is a conceptual model used in project management that describes the stages involved in an information system development project from an initial feasibility study through maintenance of the completed application.

Various SDLC methodologies have been developed to guide the processes involved, including the waterfall model (the original SDLC method), rapid application development (RAD), joint application development (JAD), the fountain model and the spiral model. In practice, several models are often combined into a hybrid methodology. Documentation is crucial regardless of the type of model chosen or devised for any application, and is usually done in parallel with the development process. Some methods work better for specific types of projects, but in the final analysis, the most important factor for the success of a project may be how closely the particular plan was followed.

- A Requirements phase, in which the requirements for the software are gathered and analyzed. Iteration should eventually result in a requirements phase that produces a complete and final specification of requirements.

- A Design phase, in which a software solution to meet the requirements is designed. This may be a new design, or an extension of an earlier design.

- An Implementation and Test phase, when the software is coded, integrated and tested.

- A Review phase, in which the software is evaluated, the current requirements are reviewed, and changes and additions to requirements proposed.

For each cycle of the model, a decision has to be made as to whether the software produced by the cycle will be discarded, or kept as a starting point for the next cycle (sometimes referred to as incremental prototyping). Eventually a point will be reached where the requirements are complete and the software can be delivered, or it becomes impossible to enhance the software as required, and a fresh start has to be made.

The iterative lifecycle model can be likened to producing software by successive approximation. Drawing an analogy with mathematical methods that use successive approximation to arrive at a final solution, the benefit of such methods depends on how rapidly they converge on a solution.
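To make the successive-approximation analogy concrete, here is a minimal sketch using Newton's method for a square root. It is only an illustration of how quickly such a method can converge, not part of any SDLC model; the function name `sqrt_by_successive_approximation` and its parameters are assumptions chosen for this example.

```python
def sqrt_by_successive_approximation(target, tolerance=1e-9, max_cycles=50):
    """Approximate sqrt(target) with Newton's method, recording every estimate.

    Each pass plays the role of one development cycle: the current
    estimate is reviewed and refined until further change is negligible.
    """
    estimate = target if target > 1 else 1.0  # crude starting point
    history = [estimate]
    for _ in range(max_cycles):
        refined = 0.5 * (estimate + target / estimate)
        history.append(refined)
        if abs(refined - estimate) < tolerance:
            break  # converged: another cycle would add almost nothing
        estimate = refined
    return history


history = sqrt_by_successive_approximation(2.0)
for cycle, value in enumerate(history):
    print(f"cycle {cycle}: estimate = {value:.9f}")
```

A method that converges this quickly needs only a handful of cycles. An iterative project whose requirements keep shifting behaves more like a slowly converging method, and the benefit of iterating shrinks accordingly.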

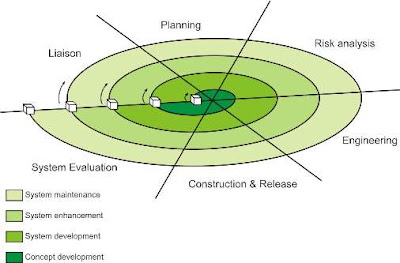

3. Spiral model:

This model, proposed by Barry Boehm in 1988, attempts to combine the strengths of various models. It incorporates the elements of the prototype-driven approach along with the classic software life cycle. It also takes into account risk assessment, whose outcome determines whether the next phase of the design activity is taken up.

Unlike all other models which view designing as a linear process, this model views it as a spiral process. This is done by representing iterative designing cycles as an expanding spiral.

Typically the inner cycles represent the early phase of requirement analysis along with prototyping to refine the requirement definition, and the outer spirals are progressively representative of the classic software designing life cycle.

At every spiral there is a risk assessment phase to evaluate the designing efforts and the associated risk involved for that particular iteration. At the end of each spiral there is a review phase so that the current spiral can be reviewed and the next phase can be planned.

Each design spiral consists of six major activities:

1. Customer Communication

2. Planning

3. Risk Analysis

4. Software Design Engineering

5. Construction and Release

6. Customer Evaluation

Advantages

1. It facilitates a high amount of risk analysis.

2. This software designing model is more suitable for designing and managing large software projects.

3. The software is produced early in the software life cycle.

Disadvantages

1. Risk analysis requires high expertise.

2. It is a costly model to use.

3. It is not suitable for smaller projects.

4. There is a lack of explicit process guidance in determining objectives, constraints and alternatives.

5. This model is relatively new. It does not have many practitioners, unlike the waterfall model or prototyping model.

4. Prototype model:

Prototyping is a technique that provides a reduced-functionality or limited-performance version of the eventual software to the user in the early stages of the software development process. If used judiciously, this approach helps to solidify user requirements earlier, thereby making the waterfall approach more effective.

Before proceeding with design and coding, a throwaway prototype is built to give the user a feel of the system. The development of the software prototype also involves design and coding, but this is not done in a formal manner. The user interacts with the prototype as they would with the eventual system and is therefore in a better position to specify requirements in more detail. The iterations refine the prototype to satisfy the needs of the user, while at the same time enabling the developer to better understand what needs to be done.

Disadvantages

1. In prototyping, as the prototype has to be discarded, some might argue that the cost involved is higher.

2. At times, while designing a prototype, the approach adopted is “quick and dirty” with the focus on quick development rather than quality.

3. The developer often makes implementation compromises in order to get a prototype working quickly.

5. RAD model (Rapid application development):

The RAD model is a linear sequential software development process that emphasizes an extremely short development cycle. The RAD model is a "high speed" adaptation of the linear sequential model in which rapid development is achieved by using a component-based construction approach. Used primarily for information systems applications, the RAD approach encompasses the following phases:

A. Business modeling

The information flow among business functions is modeled in a way that answers the following questions:

What information drives the business process?

What information is generated?

Who generates it?

Where does the information go?

Who processes it?

B. Data modeling

The information flow defined as part of the business modeling phase is refined into a set of data objects that are needed to support the business. The characteristics (called attributes) of each object are identified and the relationships between these objects are defined.

C. Process modeling

The data objects defined in the data-modeling phase are transformed to achieve the information flow necessary to implement a business function. Processing descriptions are created for adding, modifying, deleting, or retrieving a data object.

D. Application generation

The RAD model assumes the use of RAD tools such as VB, VC++ and Delphi rather than creating software using conventional third-generation programming languages. The RAD model works to reuse existing program components (when possible) or create reusable components (when necessary). In all cases, automated tools are used to facilitate construction of the software.

E. Testing and turnover

Since the RAD process emphasizes reuse, many of the program components have already been tested. This minimizes the testing and development time.
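The data modeling and process modeling phases described above can be sketched in a few lines. This is a hypothetical illustration, not output from any RAD tool: the `Invoice` data object, its attributes, and the `InvoiceStore` class are all assumed names, and an in-memory dictionary stands in for a real database.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Invoice:
    """Data modeling: a data object and its attributes (hypothetical example)."""
    invoice_id: int
    customer: str
    amount: float


class InvoiceStore:
    """Process modeling: add, modify, delete and retrieve a data object."""

    def __init__(self):
        self._records = {}  # in-memory stand-in for a database table

    def add(self, invoice):
        if invoice.invoice_id in self._records:
            raise KeyError(f"invoice {invoice.invoice_id} already exists")
        self._records[invoice.invoice_id] = invoice

    def retrieve(self, invoice_id):
        return self._records[invoice_id]

    def modify(self, invoice_id, **changes):
        # Build an updated copy of the immutable record and store it.
        updated = replace(self._records[invoice_id], **changes)
        self._records[invoice_id] = updated
        return updated

    def delete(self, invoice_id):
        del self._records[invoice_id]


store = InvoiceStore()
store.add(Invoice(invoice_id=1, customer="Acme", amount=250.0))
store.modify(1, amount=300.0)
print(store.retrieve(1))
```

Keeping the data object separate from the processing class mirrors the split between the two phases, and a small component like this is the kind of unit that could be reused across applications.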

6. COCOMO model (Constructive Cost Model):

The Constructive Cost Model (COCOMO) is an algorithmic software cost estimation model developed by Barry Boehm. The model uses a basic regression formula, with parameters that are derived from historical project data and current project characteristics.

COCOMO consists of a hierarchy of three increasingly detailed and accurate forms. The first level, Basic COCOMO, is good for quick, early, rough order-of-magnitude estimates of software costs, but its accuracy is limited because it lacks factors to account for differences in project attributes (Cost Drivers). Intermediate COCOMO takes these Cost Drivers into account, and Detailed COCOMO additionally accounts for the influence of individual project phases.

a. Basic COCOMO:

Basic COCOMO computes software development effort (and cost) as a function of program size. Program size is expressed in estimated thousands of lines of code (KLOC). COCOMO applies to three classes of software projects:

- Organic projects - "small" teams with "good" experience working with "less than rigid" requirements

- Semi-detached projects - "medium" teams with mixed experience working with a mix of rigid and less than rigid requirements

- Embedded projects - developed within a set of "tight" constraints (hardware, software, operational, ...)

The basic COCOMO equations take the form:

- Effort Applied: $E = a_b \, (\mathrm{KLOC})^{b_b}$ [man-months]

- Development Time: $D = c_b \, E^{d_b}$ [months]

- People required: $P = E / D$ [count]

The coefficients $a_b$, $b_b$, $c_b$ and $d_b$ are given in the following table:

| Software project | $a_b$ | $b_b$ | $c_b$ | $d_b$ |
|---|---|---|---|---|
| Organic | 2.4 | 1.05 | 2.5 | 0.38 |
| Semi-detached | 3.0 | 1.12 | 2.5 | 0.35 |
| Embedded | 3.6 | 1.20 | 2.5 | 0.32 |
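The basic COCOMO equations and the coefficient table above can be turned into a small calculator. This is a minimal sketch: the function name `basic_cocomo`, the dictionary layout, and the 32 KLOC project size are assumptions made for illustration, while the coefficients are the ones from the table.

```python
# Coefficients (a_b, b_b, c_b, d_b) taken from the Basic COCOMO table.
BASIC_COEFFICIENTS = {
    "organic": (2.4, 1.05, 2.5, 0.38),
    "semi-detached": (3.0, 1.12, 2.5, 0.35),
    "embedded": (3.6, 1.20, 2.5, 0.32),
}


def basic_cocomo(kloc, project_class):
    """Return (effort in man-months, development time in months, people)."""
    a, b, c, d = BASIC_COEFFICIENTS[project_class]
    effort = a * kloc ** b        # E = a_b * KLOC^b_b
    duration = c * effort ** d    # D = c_b * E^d_b
    people = effort / duration    # P = E / D
    return effort, duration, people


# Hypothetical project size of 32 KLOC, estimated for each project class.
for project_class in BASIC_COEFFICIENTS:
    effort, duration, people = basic_cocomo(32, project_class)
    print(f"{project_class:>13}: {effort:6.1f} man-months, "
          f"{duration:4.1f} months, {people:4.1f} people")
```

For the same size, the estimate grows from organic to embedded because both the multiplier and the exponent increase with the project class.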

Basic COCOMO is good for a quick estimate of software costs. However, it does not account for differences in hardware constraints, personnel quality and experience, use of modern tools and techniques, and so on.

b. Intermediate COCOMO:

Intermediate COCOMO computes software development effort as a function of program size and a set of "cost drivers" that include subjective assessments of product, hardware, personnel and project attributes. This extension considers four categories of cost drivers, each with a number of subsidiary attributes:

- Product attributes
  - Required software reliability
  - Size of application database
  - Complexity of the product

- Hardware attributes
  - Run-time performance constraints
  - Memory constraints
  - Volatility of the virtual machine environment
  - Required turnabout time

- Personnel attributes
  - Analyst capability
  - Software engineering capability
  - Applications experience
  - Virtual machine experience
  - Programming language experience

- Project attributes
  - Use of software tools
  - Application of software engineering methods
  - Required development schedule

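The Intermediate COCOMO formula now takes the form:

$E = a_i \, (\mathrm{KLOC})^{b_i} \cdot \mathrm{EAF}$

where EAF is the effort adjustment factor obtained from the cost driver ratings, and the coefficients $a_i$ and $b_i$ are given in the following table:

| Software project | $a_i$ | $b_i$ |
|---|---|---|
| Organic | 3.2 | 1.05 |
| Semi-detached | 3.0 | 1.12 |
| Embedded | 2.8 | 1.20 |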
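As a rough sketch of how the intermediate formula is applied, the effort adjustment factor (EAF) can be computed as the product of one multiplier per rated cost driver and then applied to the size-based estimate. The coefficients below come from the table above; the function names, the 32 KLOC size, and the multiplier values in `example_ratings` are assumptions for illustration only and should not be read as an official rating table.

```python
from math import prod

# Coefficients (a_i, b_i) from the Intermediate COCOMO table.
INTERMEDIATE_COEFFICIENTS = {
    "organic": (3.2, 1.05),
    "semi-detached": (3.0, 1.12),
    "embedded": (2.8, 1.20),
}


def effort_adjustment_factor(multipliers):
    """EAF is the product of the multipliers of all rated cost drivers."""
    return prod(multipliers.values())


def intermediate_cocomo_effort(kloc, project_class, multipliers):
    """E = a_i * KLOC^b_i * EAF, in man-months."""
    a, b = INTERMEDIATE_COEFFICIENTS[project_class]
    return a * kloc ** b * effort_adjustment_factor(multipliers)


# Hypothetical ratings: values above 1.0 increase effort, values below reduce it.
example_ratings = {
    "required software reliability": 1.15,
    "analyst capability": 0.86,
}

nominal = intermediate_cocomo_effort(32, "organic", {})
adjusted = intermediate_cocomo_effort(32, "organic", example_ratings)
print(f"nominal effort:  {nominal:.1f} man-months")
print(f"adjusted effort: {adjusted:.1f} man-months")
```

With no rated drivers the EAF is 1, so the estimate depends on size alone; each rated driver then scales the result up or down.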

c. Detailed COCOMO:

Detailed COCOMO incorporates all characteristics of the intermediate version with an assessment of the cost driver's impact on each step (analysis, design, etc.) of the software engineering process.

The detailed model uses different effort multipliers for each cost driver attribute. These phase-sensitive effort multipliers are each used to determine the amount of effort required to complete each phase.

In detailed COCOMO, the effort is calculated as a function of program size and a set of cost drivers given according to each phase of the software life cycle.

The five phases of detailed COCOMO are:

- Plan and requirements

- System design

- Detailed design

- Module code and test

- Integration and test

7. V-model:

The V-Model is a software development model designed to simplify the understanding of the complexity associated with developing systems. The V-model consists of a number of phases. The Verification Phases are on the left hand side of the V, the Coding Phase is at the bottom of the V and the Validation Phases are on the right hand side of the V.

Requirements analysis:

In the Requirements analysis phase, the requirements of the proposed system are collected by analyzing the needs of the user(s). This phase is concerned with establishing what the ideal system has to do. However, it does not determine how the software will be designed or built. Usually, the users are interviewed and a document called the user requirements document is generated.

The user requirements document will typically describe the system's functional, physical, interface, performance, data and security requirements as expected by the user. It is the document that the business analysts use to communicate their understanding of the system back to the users. The users carefully review this document, as it serves as the guideline for the system designers in the system design phase. The user acceptance tests are designed in this phase.

System Design:

Systems design is the phase where system engineers analyze and understand the business of the proposed system by studying the user requirements document. They figure out possibilities and techniques by which the user requirements can be implemented. If any of the requirements are not feasible, the user is informed of the issue. A resolution is found and the user requirement document is edited accordingly.

The software specification document, which serves as a blueprint for the development phase, is generated. This document contains the general system organization, menu structures, data structures, etc. It may also hold example business scenarios, sample windows and reports for better understanding. Other technical documentation, such as entity diagrams and a data dictionary, will also be produced in this phase. The documents for system testing are prepared in this phase.

Architecture Design:

The design of the computer architecture and software architecture can also be referred to as high-level design. The baseline in selecting the architecture is that it should realize all the requirements. The high-level design typically consists of the list of modules, the brief functionality of each module, their interface relationships, dependencies, database tables, architecture diagrams, technology details, etc. The integration testing design is carried out in this phase.

Module Design:

The module design phase can also be referred to as low-level design. The designed system is broken up into smaller units or modules, and each of them is explained so that the programmer can start coding directly. The low-level design document or program specifications will contain the detailed functional logic of the module in pseudocode, along with the database tables and all their elements, including type and size. The unit test design is developed in this stage.
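The four phases above each prepare the tests for one level of testing, and that pairing can be written down as a small lookup. This is only an illustrative sketch of the shape of the V; the names `V_MODEL_PAIRS` and `execution_order` are assumptions, and the pairs themselves follow the phase descriptions above.

```python
# Each design phase on the left side of the V prepares the tests
# that are executed at the matching level on the right side.
V_MODEL_PAIRS = [
    ("Requirements analysis", "User acceptance testing"),
    ("System design", "System testing"),
    ("Architecture design", "Integration testing"),
    ("Module design", "Unit testing"),
]


def execution_order(pairs):
    """Walk down the left side of the V, code, then climb the right side."""
    down = [design for design, _ in pairs]
    up = [test for _, test in reversed(pairs)]
    return down + ["Coding"] + up


order = execution_order(V_MODEL_PAIRS)
for step, phase in enumerate(order, start=1):
    print(f"{step}. {phase}")
```

Because the right side is traversed in reverse, the tests designed last (unit tests) are run first, and the user acceptance tests designed during requirements analysis are run last.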

Advantages of V-model

- It saves a considerable amount of time, and since the testing team is involved early on, they develop a very good understanding of the project from the very beginning.

- It reduces the cost of fixing defects, since defects are found in the early stages.

- It is a fast method

Disadvantages of V-model

- The biggest disadvantage of the V-model is that it is very rigid and the least flexible. If any changes happen midway, not only the requirements documents but also the test documentation needs to be updated.

- It can be implemented by only some big companies.

- It needs an established process to implement.

8. Fish model:

This is a development model for process-oriented companies. Even though it is a time-consuming and expensive model, one can rest assured that both verification and validation are done in parallel by separate teams in each phase of the model. Two reports are therefore generated by the end of each phase, one for validation and one for verification. Because all the stages except the last, the delivery and maintenance phase, are covered by the two parallel processes, the structure of this model looks like a skeleton between two parallel lines, hence the name fish model.

Advantages:

This strict process results in products of exceptional quality, so one of the most important objectives is achieved.

Disadvantages:

Time consuming and expensive.

9. Component Assembly Model:

Object technologies provide the technical framework for a component-based process model for software engineering. The object-oriented paradigm emphasizes the creation of classes that encapsulate both data and the algorithms that are used to manipulate the data. If properly designed and implemented, object-oriented classes are reusable across different applications and computer-based system architectures. The Component Assembly Model leads to software reusability. The integration/assembly of already existing software components accelerates the development process. Nowadays many component libraries are available on the Internet. If the right components are chosen, the integration aspect is made much simpler.
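A minimal sketch of the idea: one class encapsulates its data together with the algorithm that manipulates it, and two unrelated applications are assembled from it without modification. The class `RunningStatistics` and both application functions are hypothetical names invented for this illustration.

```python
class RunningStatistics:
    """Reusable component: holds its data and the algorithm that updates it."""

    def __init__(self):
        self._count = 0
        self._total = 0.0

    def add(self, value):
        self._count += 1
        self._total += value

    @property
    def mean(self):
        if self._count == 0:
            raise ValueError("no values recorded")
        return self._total / self._count


def average_response_time(samples_ms):
    """Application 1 (hypothetical): monitoring response times."""
    stats = RunningStatistics()
    for sample in samples_ms:
        stats.add(sample)
    return stats.mean


def average_order_value(orders):
    """Application 2 (hypothetical): reporting on order totals."""
    stats = RunningStatistics()
    for order in orders:
        stats.add(order["total"])
    return stats.mean


print(average_response_time([100, 200, 300]))
print(average_order_value([{"total": 10.0}, {"total": 30.0}]))
```

Neither application needs to know how the mean is maintained internally, which is what makes the component straightforward to integrate elsewhere.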
